Deep Q-Learning - Lunar Lander¶

In this assignment, you will train an agent to land a lunar lander safely on a landing pad on the surface of the moon.

Outline¶

- 1 - Import Packages

- 2 - Hyperparameters

- 3 - The Lunar Lander Environment

- 4 - Load the Environment

- 5 - Interacting with the Gym Environment

- 6 - Deep Q-Learning

- 7 - Deep Q-Learning Algorithm with Experience Replay

- 8 - Update the Network Weights

- 9 - Train the Agent

- 10 - See the Trained Agent In Action

- 11 - Congratulations!

- 12 - References

1 - Import Packages¶

We'll make use of the following packages:

numpyis a package for scientific computing in python.dequewill be our data structure for our memory buffer.namedtuplewill be used to store the experience tuples.- The

gymtoolkit is a collection of environments that can be used to test reinforcement learning algorithms. We should note that in this notebook we are usinggymversion0.24.0. PIL.Imageandpyvirtualdisplayare needed to render the Lunar Lander environment.- We will use several modules from the

tensorflow.kerasframework for building deep learning models. utilsis a module that contains helper functions for this assignment. You do not need to modify the code in this file.

Run the cell below to import all the necessary packages.

import time

from collections import deque, namedtuple

import gym

import numpy as np

import PIL.Image

import tensorflow as tf

import utils

from pyvirtualdisplay import Display

from tensorflow.keras import Sequential

from tensorflow.keras.layers import Dense, Input

from tensorflow.keras.losses import MSE

from tensorflow.keras.optimizers import Adam

# Set up a virtual display to render the Lunar Lander environment.

Display(visible=0, size=(840, 480)).start();

# Set the random seed for TensorFlow

tf.random.set_seed(utils.SEED)

MEMORY_SIZE = 100_000 # size of memory buffer

GAMMA = 0.995 # discount factor

ALPHA = 1e-3 # learning rate

NUM_STEPS_FOR_UPDATE = 4 # perform a learning update every C time steps

3 - The Lunar Lander Environment¶

In this notebook we will be using OpenAI's Gym Library. The Gym library provides a wide variety of environments for reinforcement learning. To put it simply, an environment represents a problem or task to be solved. In this notebook, we will try to solve the Lunar Lander environment using reinforcement learning.

The goal of the Lunar Lander environment is to land the lunar lander safely on the landing pad on the surface of the moon. The landing pad is designated by two flag poles and it is always at coordinates (0,0) but the lander is also allowed to land outside of the landing pad. The lander starts at the top center of the environment with a random initial force applied to its center of mass and has infinite fuel. The environment is considered solved if you get 200 points.

3.1 Action Space¶

The agent has four discrete actions available:

- Do nothing.

- Fire right engine.

- Fire main engine.

- Fire left engine.

Each action has a corresponding numerical value:

Do nothing = 0

Fire right engine = 1

Fire main engine = 2

Fire left engine = 3

3.2 Observation Space¶

The agent's observation space consists of a state vector with 8 variables:

- Its $(x,y)$ coordinates. The landing pad is always at coordinates $(0,0)$.

- Its linear velocities $(\dot x,\dot y)$.

- Its angle $\theta$.

- Its angular velocity $\dot \theta$.

- Two booleans, $l$ and $r$, that represent whether each leg is in contact with the ground or not.

3.3 Rewards¶

The Lunar Lander environment has the following reward system:

- Landing on the landing pad and coming to rest is about 100-140 points.

- If the lander moves away from the landing pad, it loses reward.

- If the lander crashes, it receives -100 points.

- If the lander comes to rest, it receives +100 points.

- Each leg with ground contact is +10 points.

- Firing the main engine is -0.3 points each frame.

- Firing the side engine is -0.03 points each frame.

3.4 Episode Termination¶

An episode ends (i.e the environment enters a terminal state) if:

The lunar lander crashes (i.e if the body of the lunar lander comes in contact with the surface of the moon).

The lander's $x$-coordinate is greater than 1.

You can check out the Open AI Gym documentation for a full description of the environment.

4 - Load the Environment¶

We start by loading the LunarLander-v2 environment from the gym library by using the .make() method. LunarLander-v2 is the latest version of the Lunar Lander environment and you can read about its version history in the Open AI Gym documentation.

env = gym.make('LunarLander-v2')

Once we load the environment we use the .reset() method to reset the environment to the initial state. The lander starts at the top center of the environment and we can render the first frame of the environment by using the .render() method.

env.reset()

PIL.Image.fromarray(env.render(mode='rgb_array'))

In order to build our neural network later on we need to know the size of the state vector and the number of valid actions. We can get this information from our environment by using the .observation_space.shape and action_space.n methods, respectively.

state_size = env.observation_space.shape

num_actions = env.action_space.n

print('State Shape:', state_size)

print('Number of actions:', num_actions)

5 - Interacting with the Gym Environment¶

The Gym library implements the standard “agent-environment loop” formalism:

In the standard “agent-environment loop” formalism, an agent interacts with the environment in discrete time steps $t=0,1,2,...$. At each time step $t$, the agent uses a policy $\pi$ to select an action $A_t$ based on its observation of the environment's state $S_t$. The agent receives a numerical reward $R_t$ and on the next time step, moves to a new state $S_{t+1}$.

5.1 Exploring the Environment's Dynamics¶

In Open AI's Gym environments, we use the .step() method to run a single time step of the environment's dynamics. In the version of gym that we are using the .step() method accepts an action and returns four values:

observation(object): an environment-specific object representing your observation of the environment. In the Lunar Lander environment this corresponds to a numpy array containing the positions and velocities of the lander as described in section 3.2 Observation Space.reward(float): amount of reward returned as a result of taking the given action. In the Lunar Lander environment this corresponds to a float of typenumpy.float64as described in section 3.3 Rewards.done(boolean): When done isTrue, it indicates the episode has terminated and it’s time to reset the environment.info(dictionary): diagnostic information useful for debugging. We won't be using this variable in this notebook but it is shown here for completeness.

To begin an episode, we need to reset the environment to an initial state. We do this by using the .reset() method.

# Reset the environment and get the initial state.

initial_state = env.reset()

Once the environment is reset, the agent can start taking actions in the environment by using the .step() method. Note that the agent can only take one action per time step.

In the cell below you can select different actions and see how the returned values change depending on the action taken. Remember that in this environment the agent has four discrete actions available and we specify them in code by using their corresponding numerical value:

Do nothing = 0

Fire right engine = 1

Fire main engine = 2

Fire left engine = 3

# Select an action

action = 0

# Run a single time step of the environment's dynamics with the given action.

next_state, reward, done, info = env.step(action)

with np.printoptions(formatter={'float': '{:.3f}'.format}):

print("Initial State:", initial_state)

print("Action:", action)

print("Next State:", next_state)

print("Reward Received:", reward)

print("Episode Terminated:", done)

print("Info:", info)

In practice, when we train the agent we use a loop to allow the agent to take many consecutive actions during an episode.

6 - Deep Q-Learning¶

In cases where both the state and action space are discrete we can estimate the action-value function iteratively by using the Bellman equation:

$$ Q_{i+1}(s,a) = R + \gamma \max_{a'}Q_i(s',a') $$

This iterative method converges to the optimal action-value function $Q^*(s,a)$ as $i\to\infty$. This means that the agent just needs to gradually explore the state-action space and keep updating the estimate of $Q(s,a)$ until it converges to the optimal action-value function $Q^*(s,a)$. However, in cases where the state space is continuous it becomes practically impossible to explore the entire state-action space. Consequently, this also makes it practically impossible to gradually estimate $Q(s,a)$ until it converges to $Q^*(s,a)$.

In the Deep $Q$-Learning, we solve this problem by using a neural network to estimate the action-value function $Q(s,a)\approx Q^*(s,a)$. We call this neural network a $Q$-Network and it can be trained by adjusting its weights at each iteration to minimize the mean-squared error in the Bellman equation.

Unfortunately, using neural networks in reinforcement learning to estimate action-value functions has proven to be highly unstable. Luckily, there's a couple of techniques that can be employed to avoid instabilities. These techniques consist of using a Target Network and Experience Replay. We will explore these two techniques in the following sections.

6.1 Target Network¶

We can train the $Q$-Network by adjusting it's weights at each iteration to minimize the mean-squared error in the Bellman equation, where the target values are given by:

$$ y = R + \gamma \max_{a'}Q(s',a';w) $$

where $w$ are the weights of the $Q$-Network. This means that we are adjusting the weights $w$ at each iteration to minimize the following error:

$$ \overbrace{\underbrace{R + \gamma \max_{a'}Q(s',a'; w)}_{\rm {y~target}} - Q(s,a;w)}^{\rm {Error}} $$

Notice that this forms a problem because the $y$ target is changing on every iteration. Having a constantly moving target can lead to oscillations and instabilities. To avoid this, we can create a separate neural network for generating the $y$ targets. We call this separate neural network the target $\hat Q$-Network and it will have the same architecture as the original $Q$-Network. By using the target $\hat Q$-Network, the above error becomes:

$$ \overbrace{\underbrace{R + \gamma \max_{a'}\hat{Q}(s',a'; w^-)}_{\rm {y~target}} - Q(s,a;w)}^{\rm {Error}} $$

where $w^-$ and $w$ are the weights the target $\hat Q$-Network and $Q$-Network, respectively.

In practice, we will use the following algorithm: every $C$ time steps we will use the $\hat Q$-Network to generate the $y$ targets and update the weights of the target $\hat Q$-Network using the weights of the $Q$-Network. We will update the weights $w^-$ of the the target $\hat Q$-Network using a soft update. This means that we will update the weights $w^-$ using the following rule:

$$ w^-\leftarrow \tau w + (1 - \tau) w^- $$

where $\tau\ll 1$. By using the soft update, we are ensuring that the target values, $y$, change slowly, which greatly improves the stability of our learning algorithm.

Exercise 1¶

In this exercise you will create the $Q$ and target $\hat Q$ networks and set the optimizer. Remember that the Deep $Q$-Network (DQN) is a neural network that approximates the action-value function $Q(s,a)\approx Q^*(s,a)$. It does this by learning how to map states to $Q$ values.

To solve the Lunar Lander environment, we are going to employ a DQN with the following architecture:

An

Inputlayer that takesstate_sizeas input.A

Denselayer with64units and areluactivation function.A

Denselayer with64units and areluactivation function.A

Denselayer withnum_actionsunits and alinearactivation function. This will be the output layer of our network.

In the cell below you should create the $Q$-Network and the target $\hat Q$-Network using the model architecture described above. Remember that both the $Q$-Network and the target $\hat Q$-Network have the same architecture.

Lastly, you should set Adam as the optimizer with a learning rate equal to ALPHA. Recall that ALPHA was defined in the Hyperparameters section. We should note that for this exercise you should use the already imported packages:

from tensorflow.keras.layers import Dense, Input

from tensorflow.keras.optimizers import Adam

# UNQ_C1

# GRADED CELL

# Create the Q-Network

q_network = Sequential([

### START CODE HERE ###

### END CODE HERE ###

])

# Create the target Q^-Network

target_q_network = Sequential([

### START CODE HERE ###

### END CODE HERE ###

])

### START CODE HERE ###

optimizer = None

### END CODE HERE ###

# UNIT TEST

from public_tests import *

test_network(q_network)

test_network(target_q_network)

test_optimizer(optimizer, ALPHA)

Click for hints

# Create the Q-Network

q_network = Sequential([

Input(shape=state_size),

Dense(units=64, activation='relu'),

Dense(units=64, activation='relu'),

Dense(units=num_actions, activation='linear'),

])

# Create the target Q^-Network

target_q_network = Sequential([

Input(shape=state_size),

Dense(units=64, activation='relu'),

Dense(units=64, activation='relu'),

Dense(units=num_actions, activation='linear'),

])

optimizer = Adam(learning_rate=ALPHA)

6.2 Experience Replay¶

When an agent interacts with the environment, the states, actions, and rewards the agent experiences are sequential by nature. If the agent tries to learn from these consecutive experiences it can run into problems due to the strong correlations between them. To avoid this, we employ a technique known as Experience Replay to generate uncorrelated experiences for training our agent. Experience replay consists of storing the agent's experiences (i.e the states, actions, and rewards the agent receives) in a memory buffer and then sampling a random mini-batch of experiences from the buffer to do the learning. The experience tuples $(S_t, A_t, R_t, S_{t+1})$ will be added to the memory buffer at each time step as the agent interacts with the environment.

For convenience, we will store the experiences as named tuples.

# Store experiences as named tuples

experience = namedtuple("Experience", field_names=["state", "action", "reward", "next_state", "done"])

By using experience replay we avoid problematic correlations, oscillations and instabilities. In addition, experience replay also allows the agent to potentially use the same experience in multiple weight updates, which increases data efficiency.

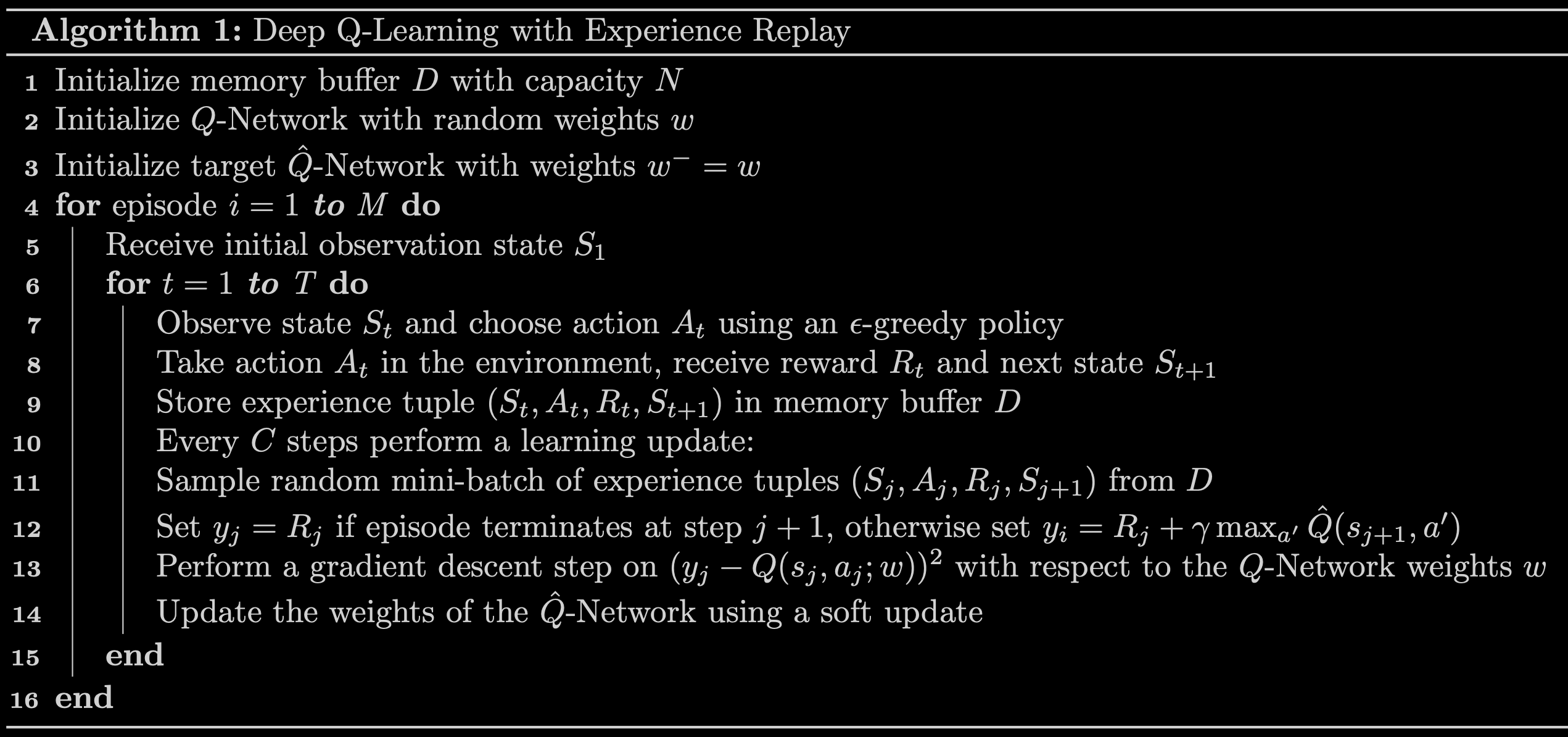

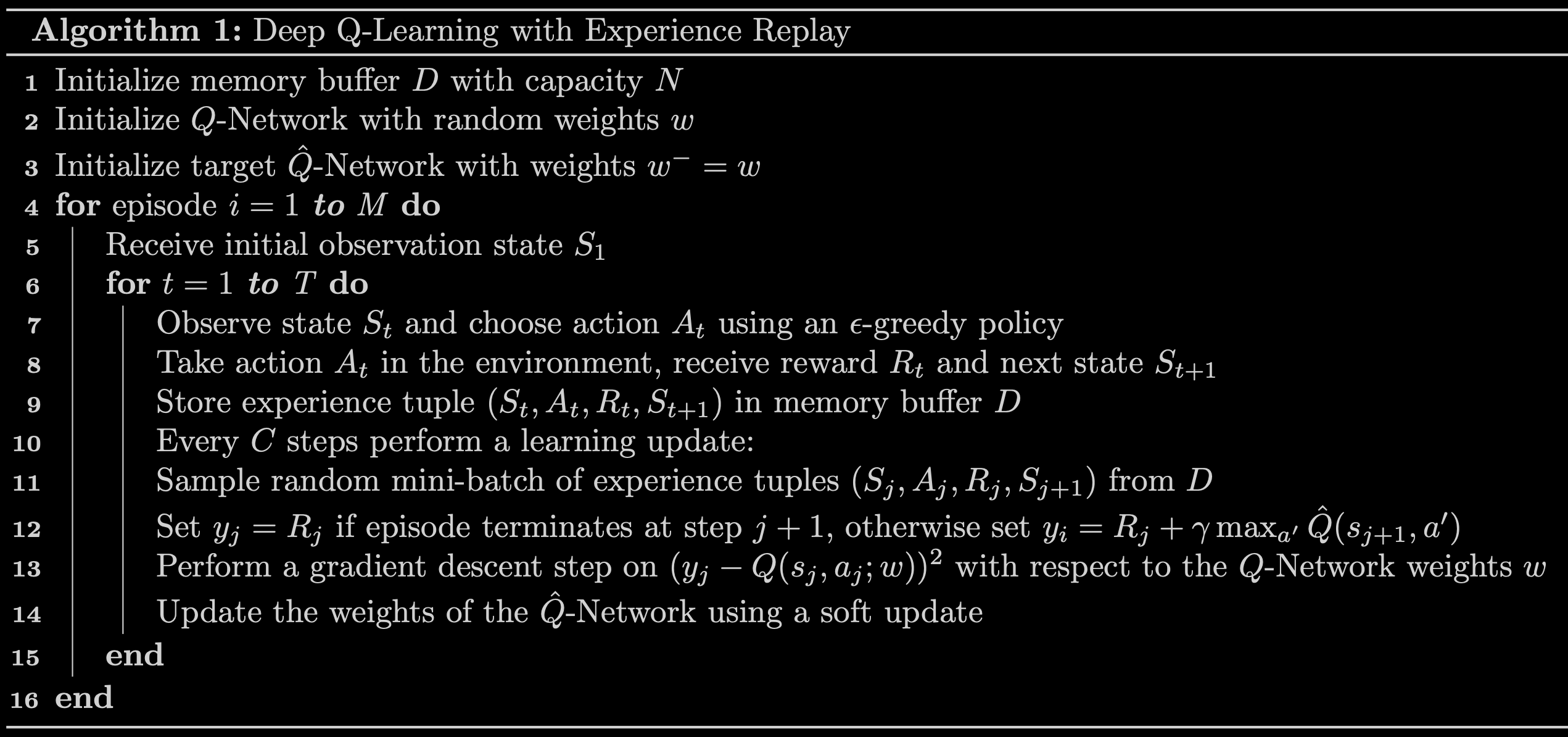

7 - Deep Q-Learning Algorithm with Experience Replay¶

Now that we know all the techniques that we are going to use, we can put them togther to arrive at the Deep Q-Learning Algorithm With Experience Replay.

Exercise 2¶

In this exercise you will implement line 12 of the algorithm outlined in Fig 3 above and you will also compute the loss between the $y$ targets and the $Q(s,a)$ values. In the cell below, complete the compute_loss function by setting the $y$ targets equal to:

$$ \begin{equation} y_j = \begin{cases} R_j & \text{if episode terminates at step } j+1\\ R_j + \gamma \max_{a'}\hat{Q}(s_{j+1},a') & \text{otherwise}\\ \end{cases} \end{equation} $$

Here are a couple of things to note:

The

compute_lossfunction takes in a mini-batch of experience tuples. This mini-batch of experience tuples is unpacked to extract thestates,actions,rewards,next_states, anddone_vals. You should keep in mind that these variables are TensorFlow Tensors whose size will depend on the mini-batch size. For example, if the mini-batch size is64then bothrewardsanddone_valswill be TensorFlow Tensors with64elements.Using

if/elsestatements to set the $y$ targets will not work when the variables are tensors with many elements. However, notice that you can use thedone_valsto implement the above in a single line of code. To do this, recall that thedonevariable is a Boolean variable that takes the valueTruewhen an episode terminates at step $j+1$ and it isFalseotherwise. Taking into account that a Boolean value ofTruehas the numerical value of1and a Boolean value ofFalsehas the numerical value of0, you can use the factor(1 - done_vals)to implement the above in a single line of code. Here's a hint: notice that(1 - done_vals)has a value of0whendone_valsisTrueand a value of1whendone_valsisFalse.

Lastly, compute the loss by calculating the Mean-Squared Error (MSE) between the y_targets and the q_values. To calculate the mean-squared error you should use the already imported package MSE:

from tensorflow.keras.losses import MSE

# UNQ_C2

# GRADED FUNCTION: calculate_loss

def compute_loss(experiences, gamma, q_network, target_q_network):

"""

Calculates the loss.

Args:

experiences: (tuple) tuple of ["state", "action", "reward", "next_state", "done"] namedtuples

gamma: (float) The discount factor.

q_network: (tf.keras.Sequential) Keras model for predicting the q_values

target_q_network: (tf.keras.Sequential) Karas model for predicting the targets

Returns:

loss: (TensorFlow Tensor(shape=(0,), dtype=int32)) the Mean-Squared Error between

the y targets and the Q(s,a) values.

"""

# Unpack the mini-batch of experience tuples

states, actions, rewards, next_states, done_vals = experiences

# Compute max Q^(s,a)

max_qsa = tf.reduce_max(target_q_network(next_states), axis=-1)

# Set y = R if episode terminates, otherwise set y = R + γ max Q^(s,a).

### START CODE HERE ###

y_targets = None

### END CODE HERE ###

# Get the q_values

q_values = q_network(states)

q_values = tf.gather_nd(q_values, tf.stack([tf.range(q_values.shape[0]),

tf.cast(actions, tf.int32)], axis=1))

# Compute the loss

### START CODE HERE ###

loss = None

### END CODE HERE ###

return loss

# UNIT TEST

test_compute_loss(compute_loss)

Click for hints

def compute_loss(experiences, gamma, q_network, target_q_network):

"""

Calculates the loss.

Args:

experiences: (tuple) tuple of ["state", "action", "reward", "next_state", "done"] namedtuples

gamma: (float) The discount factor.

q_network: (tf.keras.Sequential) Keras model for predicting the q_values

target_q_network: (tf.keras.Sequential) Karas model for predicting the targets

Returns:

loss: (TensorFlow Tensor(shape=(0,), dtype=int32)) the Mean-Squared Error between

the y targets and the Q(s,a) values.

"""

# Unpack the mini-batch of experience tuples

states, actions, rewards, next_states, done_vals = experiences

# Compute max Q^(s,a)

max_qsa = tf.reduce_max(target_q_network(next_states), axis=-1)

# Set y = R if episode terminates, otherwise set y = R + γ max Q^(s,a).

y_targets = rewards + (gamma * max_qsa * (1 - done_vals))

# Get the q_values

q_values = q_network(states)

q_values = tf.gather_nd(q_values, tf.stack([tf.range(q_values.shape[0]),

tf.cast(actions, tf.int32)], axis=1))

# Calculate the loss

loss = MSE(y_targets, q_values)

return loss

8 - Update the Network Weights¶

We will use the agent_learn function below to implement lines 12 -14 of the algorithm outlined in Fig 3. The agent_learn function will update the weights of the $Q$ and target $\hat Q$ networks using a custom training loop. Because we are using a custom training loop we need to retrieve the gradients via a tf.GradientTape instance, and then call optimizer.apply_gradients() to update the weights of our $Q$-Network. Note that we are also using the @tf.function decorator to increase performance. Without this decorator our training will take twice as long. If you would like to know more about how to increase performance with @tf.function take a look at the TensorFlow documentation.

The last line of this function updates the weights of the target $\hat Q$-Network using a soft update. Feel free to take a look at the utils module to see how the soft update is implemented.

@tf.function

def agent_learn(experiences, gamma):

"""

Updates the weights of the Q networks.

Args:

experiences: (tuple) tuple of ["state", "action", "reward", "next_state", "done"] namedtuples

gamma: (float) The discount factor.

"""

# Calculate the loss

with tf.GradientTape() as tape:

loss = compute_loss(experiences, gamma, q_network, target_q_network)

# Get the gradients of the loss with respect to the weights.

gradients = tape.gradient(loss, q_network.trainable_variables)

# Update the weights of the q_network.

optimizer.apply_gradients(zip(gradients, q_network.trainable_variables))

# update the weights of target q_network

utils.update_target_network(q_network, target_q_network)

9 - Train the Agent¶

We are now ready to train our agent. In the cell below we implement the Deep Q-Learning Algorithm with Experience Replay to train our agent to solve the Lunar Lander environment.

Note: With the default notebook parameters, the following cell takes between 10 to 15 minutes to run.

start = time.time()

num_episodes = 2000

max_num_timesteps = 1000

total_point_history = []

num_p_av = 100 # number of total points to use for averaging

epsilon = 1.0 # initial ε value for ε-greedy policy

# Create a memory buffer D with capacity N = MEMORY_SIZE

memory_buffer = deque(maxlen=MEMORY_SIZE)

# Set the target model weights equal to the q_network weights

target_q_network.set_weights(q_network.get_weights())

for i in range(num_episodes):

# Reset the environment to the initial state and get the initial state.

state = env.reset()

total_points = 0

for t in range(max_num_timesteps):

# Choose an action using an ε-greedy policy.

state_qn = np.expand_dims(state, axis=0) # state needs to be the right shape for the q_network

q_values = q_network(state_qn)

action = utils.get_action(q_values, epsilon)

# Take action A and receive reward R and the next state S'

next_state, reward, done, _ = env.step(action)

# Store experience (S,A,R,S') in the memory buffer D.

# We store the done variable as well for convenience.

memory_buffer.append(experience(state, action, reward, next_state, done))

# Only update the network every NUM_STEPS_FOR_UPDATE time steps.

update = utils.check_update_conditions(t, NUM_STEPS_FOR_UPDATE, memory_buffer)

if update:

# Sample random mini-batch of experience tuples (S,A,R,S') from D

experiences = utils.get_experiences(memory_buffer)

# Perform a gradient descent step and update the network weights

agent_learn(experiences, GAMMA)

state = next_state.copy()

total_points += reward

if done:

break

total_point_history.append(total_points)

av_latest_points = np.mean(total_point_history[-num_p_av:])

# Update the ε value

epsilon = utils.get_new_eps(epsilon)

print(f"\rEpisode {i+1} | Total point average of the last {num_p_av} episodes: {av_latest_points:.2f}", end="")

if (i+1) % num_p_av == 0:

print(f"\rEpisode {i+1} | Total point average of the last {num_p_av} episodes: {av_latest_points:.2f}")

# We will consider that the environment is solved if we get an

# average of 200 points in the last 100 episodes.

if av_latest_points >= 200.0:

print(f"\n\nEnvironment solved in {i+1} episodes!")

q_network.save('lunar_lander_model.h5')

break

tot_time = time.time() - start

print(f"\nTotal Runtime: {tot_time:.2f} s ({(tot_time/60):.2f} min)")

We can plot the point history to see how our agent improved during training.

# Plot the point history

utils.plot_history(total_point_history)

10 - See the Trained Agent In Action¶

Now that we have trained our agent, we can see it in action. We will use the utils.create_video function to create a video of our agent interacting with the environment using the trained $Q$-Network. The utils.create_video function uses the imageio library to create the video. This library produces some warnings that can be distracting, so, to suppress these warnings we run the code below.

# Suppress warnings from imageio

import logging

logging.getLogger().setLevel(logging.ERROR)

In the cell below we create a video of our agent interacting with the Lunar Lander environment using the trained q_network. The video is saved to the videos folder with the given filename. We use the utils.embed_mp4 function to embed the video in the Jupyter Notebook so that we can see it here directly without having to download it.

We should note that since the lunar lander starts with a random initial force applied to its center of mass, every time you run the cell below you will see a different video. If the agent was trained properly, it should be able to land the lunar lander in the landing pad every time, regardless of the initial force applied to its center of mass.

filename = "./videos/lunar_lander.mp4"

utils.create_video(filename, env, q_network)

utils.embed_mp4(filename)

11 - Congratulations!¶

You have successfully used Deep Q-Learning with Experience Replay to train an agent to land a lunar lander safely on a landing pad on the surface of the moon. Congratulations!

12 - References¶

If you would like to learn more about Deep Q-Learning, we recommend you check out the following papers.